TikTok, a video-sharing app known for its popularity among minors, is creating more buzz than Snapchat, with 500 million active users worldwide.

But with a lack of moderation and insufficient safety controls, live-streaming apps like TikTok create a space where abusers can sexually groom children, a reality that landed the app on the National Center on Sexual Exploitation's Dirty Dozen List for 2020.

Forbes identified TikTok as a "magnet for predators," and several experts have called it a "hunting ground" that lures child predators and potential sex traffickers.

Susan McLean, an Australia-based cybersecurity expert, warns that TikTok is not safe for children and that "any app that allows communication can be used by predators."

TikTok users can create and share short videos with camera effects and music, engaging in trends like dancing and lip-synching to songs, which McLean says is something child predators “like to watch.”

Predators grooming young girls fast on TikTok

Instead of an extended process of manipulation and building trust, grooming can now happen almost instantaneously. In fact, according to some experts, young girls are sharing self-generated sexual content online “within seconds” of going on an app.

The Internet Watch Foundation (IWF), an organization that specializes in removing child abuse imagery from the internet, recently took action on more than 37,000 reports containing self-generated images and videos of children. The IWF reports that girls were the victims in 92 percent of the child sexual abuse content it removed, and that 80 percent depicted girls between the ages of 11 and 13.

What’s more, a 2019 investigation by The Sun found children as young as 8 years old being targeted and groomed on TikTok.

The Sun reported that "within minutes" of downloading the app, its investigators were bombarded with "deeply disturbing and sexually suggestive comments" from predators who used words like "sexy," "hot," and "yummy" to describe videos of young children singing and dancing.

Susie Hargreaves, chief executive of The Internet Watch Foundation, emphasizes similar concerns about how fast the grooming process happens, particularly to young girls.

“Within seconds they will be asked to expose themselves. Perpetrators will say ‘take your top off’ or ‘Let’s see you naked.’ It is scarily easy to get children to do things online. These children clearly do not realize it is an adult coercing and tricking them into doing things…Just because a child is in their bedroom, it does not necessarily mean they are safe.”

Some men secretly record the children and distribute the footage on child sex abuse sites, and in some cases, use the footage to blackmail their underage victims.

Hargreaves suggests that young, naive girls are highly vulnerable to exploitation because they're often not "emotionally mature" enough to realize what is happening, are easily flattered by perpetrators' compliments, and are unaware of the potential consequences.

Sarah Smith, a technical projects officer for the IWF, emphasized how common it is for exploitation to occur via apps that can connect children with strangers—especially because online grooming is becoming increasingly “normalized” and children are exposing themselves online for “likes” or to gain popularity.

“The historic idea of grooming being a long-term process has gone. It is more that there is a societal grooming process that takes place, in that these children see this as almost a normalized behavior. It is not a sexual activity for them, but almost part of the trade-off, unfortunately, of getting the popularity on social media.”

Hargreaves describes this as a “national crisis”, emphasizing that self-generated content has risen “exponentially year on year” since 2014.

How TikTok’s updated safety features work

Users are supposed to be at least 13 to use TikTok, although the age verification process is questionable. Profiles are public by default once created, meaning anyone can contact the user, including a predator pretending to be someone else.

While there's clearly still a long way to go when it comes to protecting users, TikTok advises parents to oversee their child's use of the app, and it recently made some improvements to its safety features, including some that the National Center on Sexual Exploitation had been advocating for.

Updates include removing an unnecessary 30-day expiration date on the Restricted Mode and Screen Management settings that parents put in place, and introducing Family Safe Mode and Family Pairing.

It's important to note that these settings are not on by default and cannot simply be toggled on and off. Instead, parents have to manually find and enable each one when managing a minor's account. This is a step in the right direction, but there are clearly safety problems TikTok still needs to fix.

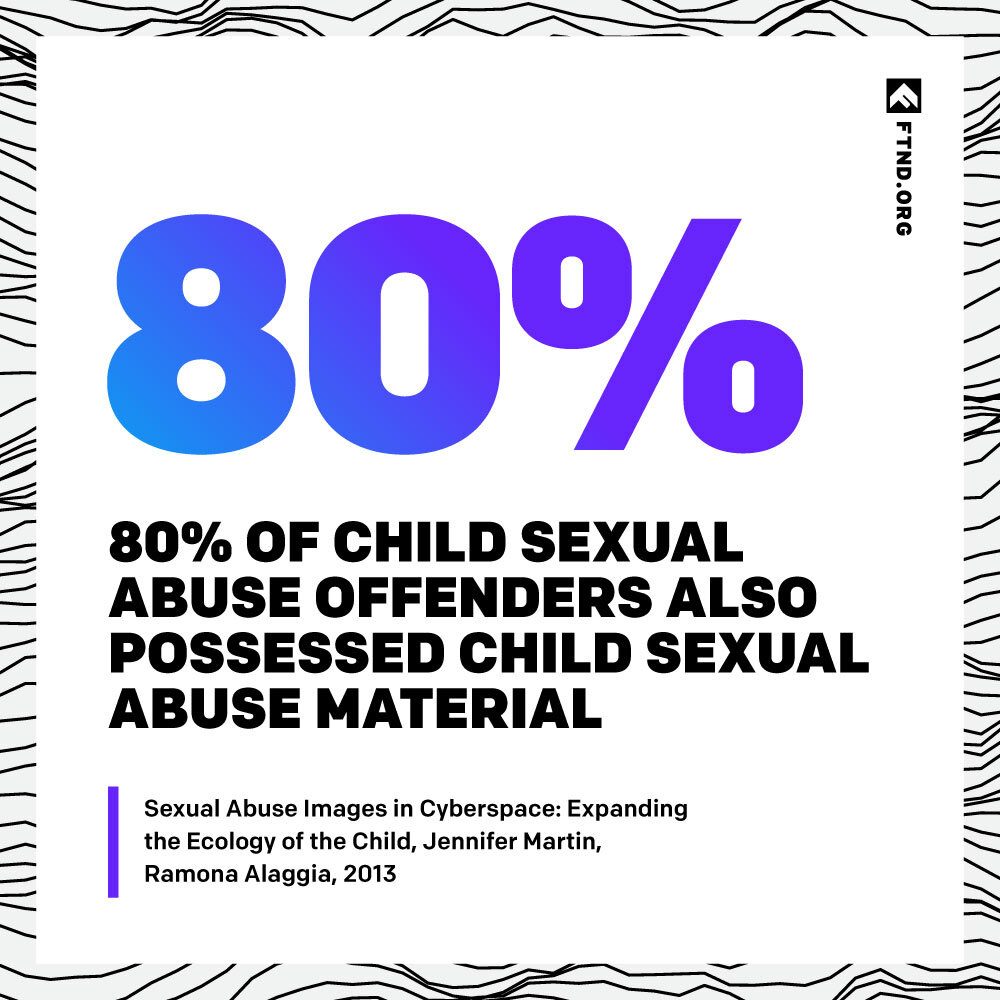

The connection between porn and online predators

So what does porn have to do with all of this? For starters, porn is an industry that profits off the sexualization of young girls, and porn culture makes this existing issue so much worse. In the porn world, you're never too young to be sexualized, fantasized about, consumed, and distributed online.

As Pornhub boasts in its annual report, the "teen" porn category has topped the charts for nearly a decade. You heard right: an entire genre dedicated to girls who look (and very well may be) under the age of 18 is vastly popular and deemed acceptable, even though the same behavior involving girls below the age of consent would be illegal in real life.

Porn fuels, promotes, and facilitates the fantasy that younger equals sexier. Now consider this—if millions of people across the world are consuming porn involving and depicting underage girls, how could this impact their sexual tastes and expectations?

At the very least, mass consumption of the sexualization of young girls reinforces the idea that this behavior is normal, desirable, sexy, and even solicited.

Porn is the source of sexual education and indoctrination for millions of consumers, so sadly it’s not surprising to see its impact on how society thinks and acts—including the sexualization and exploitation of young girls. But this isn’t the type of world we should accept.

Refuse to accept the norm of teens being treated as sex objects. Fight for a world where young girls feel worth more than “likes” and perceived sex appeal. Learn about the real harms of porn, and share the facts.

Your Support Matters Now More Than Ever

Most kids today are exposed to porn by the age of 12. By the time they're teenagers, 75% of boys and 70% of girls have already viewed it (Robb & Mann, 2023), often before they've had a single healthy conversation about it.

Even more concerning: over half of boys and nearly 40% of girls believe porn is a realistic depiction of sex (Martellozzo et al., 2016). And among teens who have seen porn, more than 79% use it to learn how to have sex (Robb & Mann, 2023). That means millions of young people are getting sex ed from violent, degrading content, which becomes their baseline understanding of intimacy. Among the most popular porn videos, 33% to 88% contain physical aggression and nonconsensual, violence-related themes (Fritz et al., 2020; Bridges et al., 2010).

From increasing rates of loneliness, depression, and self-doubt, to distorted views of sex, reduced relationship satisfaction, and riskier sexual behavior among teens, porn is impacting individuals, relationships, and society worldwide (Fight the New Drug, 2024).

Sources

Bridges et al. (2010). Aggression and sexual behavior in best-selling pornography videos: A content analysis. Violence Against Women.

Fight the New Drug. (2024, May). Get the Facts (series of web articles). Fight the New Drug.

Fritz, N., Malic, V., Paul, B., & Zhou, Y. (2020). A descriptive analysis of the types, targets, and relative frequency of aggression in mainstream pornography. Archives of Sexual Behavior, 49(8), 3041-3053. doi:10.1007/s10508-020-01773-0

Martellozzo, E., Monaghan, A., Adler, J. R., Davidson, J., Leyva, R., & Horvath, M. A. H. (2016). "I wasn't sure it was normal to watch it": A quantitative and qualitative examination of the impact of online pornography on the values, attitudes, beliefs and behaviours of children and young people. Middlesex University, NSPCC, & Office of the Children's Commissioner.

Robb, M. B., & Mann, S. (2023). Teens and pornography. San Francisco, CA: Common Sense.

This is why Fight the New Drug exists—but we can’t do it without you.

Your donation directly fuels the creation of new educational resources, including our awareness-raising videos, podcasts, research-driven articles, engaging school presentations, and digital tools that reach youth where they are: online and in school. It equips individuals, parents, educators, and youth with trustworthy resources to start the conversation.

Will you join us? We're grateful for whatever you can give, but a recurring donation makes the biggest difference. Every dollar directly supports our vital work, and every individual we reach decreases sexual exploitation. Let's fight for real love.