In a recent investigative op-ed for The New York Times, journalist Nicholas Kristof recounted the tragic experience of a 16-year-old girl in Perth, Australia. This teen Snapchatted a nude photo of herself to her boyfriend at the time, with the message, “I love you. I trust you.”

Without consent, the boyfriend took a screenshot of the Snap and shared it with five of his friends, who then shared it with 47 other friends. Before long, more than 200 students at the teen’s school had seen her image. One person uploaded it to a porn site with her name and school.

The teen stopped attending school and self-medicated with drugs. Her family moved to a different city and then a different state, but felt she could not escape. At 21 years old, she died by suicide.

The harmful effects of nonconsensually sharing private sexual images, also known as image-based sexual abuse (IBSA), are serious, and the devastation victims experience is made exponentially worse if their images or videos are uploaded to porn sites.

This is why XVideos is the latest tube site under fire for reportedly hosting this abusive content.

A quick timeline of events

In December 2020, Nicholas Kristof wrote a different op-ed that went viral. It exposed Pornhub for reportedly hosting and profiting from nonconsensual content, or IBSA, and child sexual abuse material (CSAM), and it criticized the company for its reportedly poor treatment of victims who appealed to the website to remove abusive images.

In response to these allegations, Pornhub announced a series of improvements, including removing the download feature and only permitting verified users to upload content. Yet it wasn’t enough to retain payment services like Mastercard, Discover, and Visa, which independently verified the existence of illicit content on Pornhub and severed ties with the company.

At the start of 2021, the Canadian government opened an inquiry into Pornhub’s dealings and reviewed its parent company, MindGeek. The hearings included testimony from the porn company’s executives and from victims whose lives have been dramatically altered by videos of their abuse being nonconsensually shared and promoted on Pornhub.

The outcome of these discussions is still unclear, but one thing we know for sure is that our culture cannot go back to pretending we are unaware of the kind of content on porn sites. Nonconsensual content on porn tube sites that rely on user-generated material has been proven to be common, accessible, and devastating to those who are victimized.

While Kristof’s article garnered a lot of outrage and attention about Pornhub and MindGeek, one pornographer said to Kristof that his reporting was a gift to Pornhub’s competitor—that the journalist was like “Santa Claus” to XVideos. That has proven to be true, so far.

When Pornhub deleted 10 million videos from its site that were uploaded by unverified users, many upset porn consumers flocked to its rival site, XVideos, a site with far fewer scruples than Pornhub and seemingly less oversight.

What we know about XVideos

The issue of nonconsensual content and CSAM online is much bigger than Pornhub. It exists on other porn sites, social media platforms, and just about any site that allows users to upload content.

XVideos and Pornhub are free adult tube sites that have competed for years for the top spot in the porn industry. Currently, XVideos ranks as the number one porn site in the world and the seventh most visited website on the internet, with its sister site XNXX.com close behind at number nine. Since the controversy, Pornhub has tumbled out of the top ten to number 13.

This is part of the reason Kristof’s reporting was described as a “gift” to XVideos. As Pornhub has been under fire, consumers have migrated from Pornhub to XVideos, where there are very few restrictions on content.

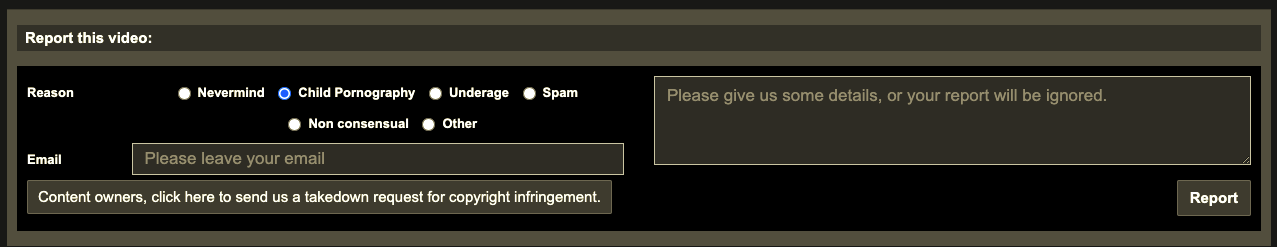

The website guides consumers to videos that claim to be of children. It was reported that searching for “young” returned similar suggestions including “tiny,” “girl,” “boy,” “jovencita,” and “youth porn.” And if that wasn’t concerning enough, the page where CSAM can be reported on XVideos says that if there are no details included with the report, it “will be ignored.”

Why would they ignore even a single report of CSAM, details included or not? Are there so many CSAM reports they have to go through that they have to discard some for lack of detail? Does this seem to be a reporting system from a site that takes claims of CSAM as seriously as it should?

XVideos content report page, April 2021

Earlier this year, Czech authorities announced an investigation into XVideos, which is based in the Czech Republic, after the allegations that the company was enabling and allowing users to upload and share IBSA, nonconsensual content, and CSAM. Under this pressure, XVideos has removed some underage search terms, but even searching for “twelve” suggested other terms including “elementary” and “training bra.”

These efforts to make the site safer from abusive content are hardly comprehensive. Just recently, advocates have reportedly found clear examples of CSAM and nonconsensual content on the site without having to search very thoroughly.

Examples of real porn titles on the site include references to “getting f—ed awake,” “passed out” wives, and 18-year-olds taking advantage of school children. Some of these types of videos look staged, but many look very real.

The role of payment services and search engines

Going forward, Kristof suggests a couple of steps. First, he called on credit card companies and payment services to abandon XVideos, much as they cut ties with Pornhub. After the publication of his op-ed, PayPal contacted Kristof to announce that its service would no longer be available for purchasing advertising on XVideos or its sister sites.

The second point is perhaps more challenging to fix. XVideos and Pornhub rely on search engines to drive traffic to their sites. Kristof called out Google in particular, but also Yahoo and Bing, for enabling the abusive content on sites like XVideos. If such a claim is making you raise your eyebrows, let us explain.

When consumers are looking for porn, a common place to start is Google. Searching for an explicit term on any of the major search engines returns links to Pornhub and XVideos, which are constantly vying for the top listing. This is also true for search terms that are suggestive of underage or nonconsensual material. For example, in Kristof’s research, he typed a series of terms into Google, including “schoolgirl” and “rape unconscious girl,” which returned links to XVideos advertising these types of videos.

But wait, if people go looking for something, they’ll eventually find it…right? Does Google really have the capability to redirect people away from searches yielding nonconsensual content and CSAM? Yes, it does.

To prove this, Kristof googled a few things, one of which was “how do I commit suicide?” and the top results returned suicide prevention hotlines. If that simple redirection is possible for suicide or searching for ways to “poison my husband,” why not for illicit content?

Why this matters

Not all content on XVideos is actual IBSA or CSAM.

Many of the links Google returns for a search term like “schoolgirl” will likely be of porn performers who mimic these so-called “genres,” but this blend of professional videos with nonconsensual and abusive content makes it even more challenging for consumers to tell the difference between the two.

Perhaps another question we should be asking is, how did such abusive scenarios become porn genres in the first place?

A study published this year found that one in eight video titles on the home pages alone of three major porn tube sites (XVideos, Pornhub, and XHamster) described sexual violence or nonconsensual conduct. Many more of the videos themselves showed violence. Videos portrayed women caught on spy cams in changing rooms and unconscious women being raped, and some even depicted children or adults trying to fight back against an assault.

The report also found the most common form of sexual violence shown was between family members, and frequent terms used to describe the videos included “abuse,” “annihilation,” and “attack.” The researchers conclude that these sites are “likely hosting” unlawful material.

Another study analyzing the acts portrayed in porn videos suggests that as few as 35.0% and as many as 88.2% of popular porn scenes contain violence or aggression, and that women are the targets of violence approximately 97% of the time.

These findings, combined with victims’ accounts and the explicit search terms, are difficult to digest. But we keep covering the issue of abusive and exploitative content on porn sites to raise awareness that, in turn, reduces the demand that enables sexual violence and exploitation.

There is still a long way to go toward stopping online exploitation, including IBSA and CSAM. Videos that show “7th grader” or “unconscious girl” are not sexual fantasy and entertainment; they are exploitative. Exploitation, rape, sexual assault, and sex abuse are not sexy.
