Since 2020, the public has known, amid some controversy, that Apple automatically screens, encrypts, and flags images on personal devices to help find and report child sexual abuse material (CSAM), commonly known as “child porn.”

But in August 2021, the tech giant announced three new child safety features, coming later this year, that go a step further by actively intervening when CSAM is found on a device.

Apple says the purpose of this new CSAM detection technology—NeuralHash—is to limit the spread of CSAM and help protect children from predators who use mobile devices as a tool to recruit and exploit them.

The announcement has drawn praise from parents and child safety experts. Despite Apple’s assurances about consumer privacy, however, it has also met resistance from some privacy advocates.

Let’s take a look at what these new features entail, and how they could affect people in the U.S. who own an Apple device.

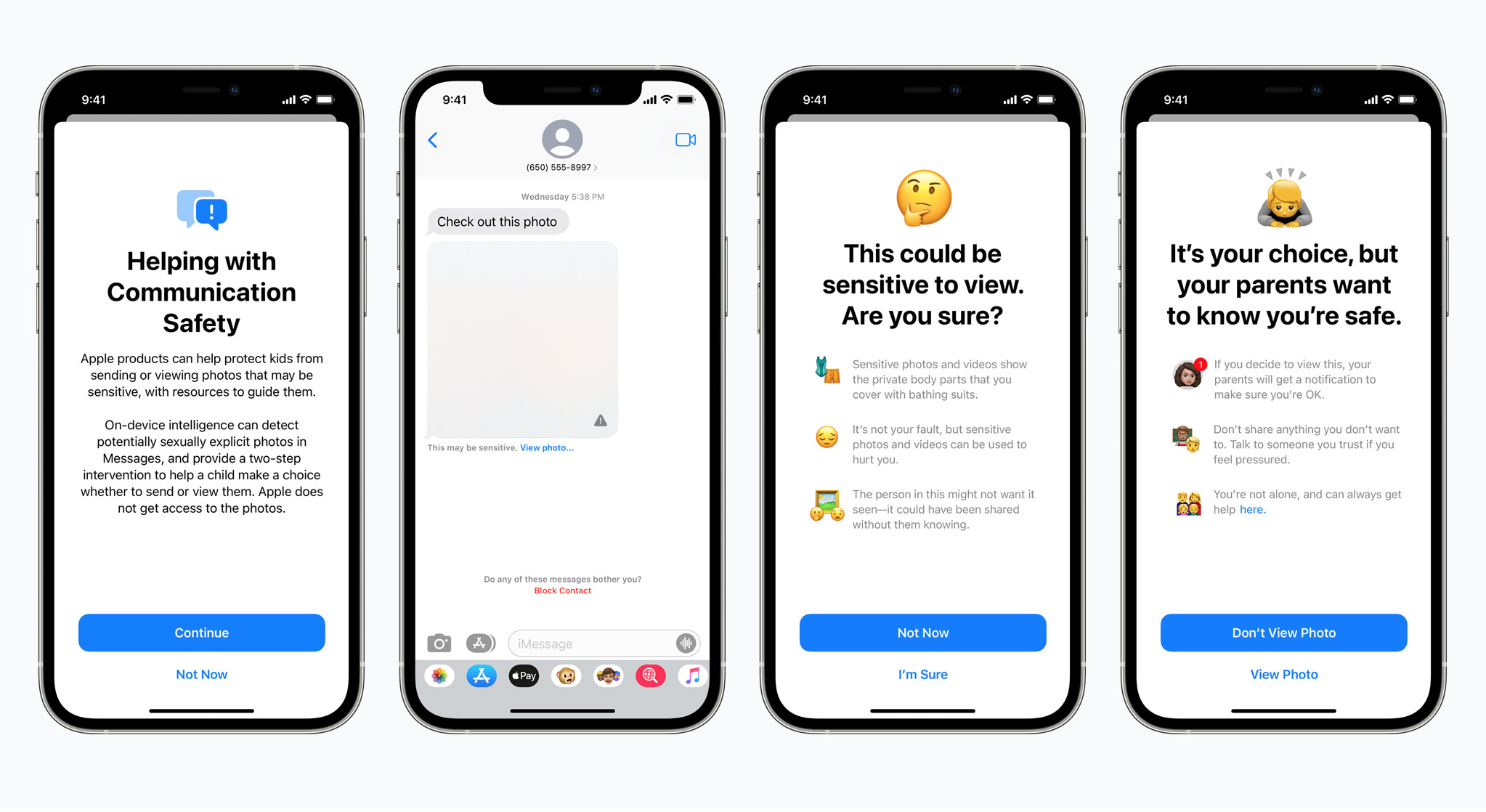

1. Communication safety features in iMessage

The Messages app will use new on-device machine learning to warn children and their parents when a child sends or receives sexually explicit photos, without giving Apple access to the private messages.

The goal is to help guide kids to make informed decisions before sending or receiving explicit content. It’s also meant to protect children from sexual predators by interrupting communications before they happen and offering advice and resources.

A large amount of CSAM is actually self-generated, meaning explicit images are created by minors themselves—commonly known as “sharing nudes.” This new feature is designed to warn minors about engaging in this behavior, too.

Here is how it works: if a child receives this type of content in Messages, the photo is blurred, and the child is warned about the content, offered resources, and reassured that it’s not their fault and that it’s okay not to view the photo. The child is also told that their parents care about their safety and will be sent a message if the child chooses to view the photo.

Similar protections are in place if a child attempts to send a sexually explicit photo to someone—a warning is given, and parents can receive a message if the photo is sent.

2. CSAM detection on Apple devices

Child Sexual Abuse Material (CSAM) includes any content that depicts sexually explicit activities involving a child—meaning a person under 18 years of age. The spread of CSAM is rampant, with 21.8 million tips made to the National Center for Missing & Exploited Children (NCMEC) in 2020 alone.

To help address this issue, NeuralHash detects known CSAM in photos uploaded to iCloud Photos, and Apple then reports confirmed instances to NCMEC, a national clearinghouse for CSAM reports that works closely with law enforcement agencies throughout the United States.

A hash is created by feeding a photo into a “hashing function.” What comes out the other side is a unique digital fingerprint that looks like a jumble of letters and numbers. And the best part is you can’t turn the “hash” back into the photo, but the same photo, or identical copies of it, will always create the same hash.

Here’s what a hash looks like:

48008908c31b9c8f8ba6bf2a4a283f29c15309b1
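To make the fingerprint idea concrete, here is a minimal Python sketch using the standard library’s SHA-1 function. The example hash above is 40 hexadecimal characters long, the same length as a SHA-1 digest, but we are only assuming that is how it was produced, and SHA-1 is not what NeuralHash uses. The "photo" bytes below are made-up placeholders, not real image data.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Return the SHA-1 digest of raw file bytes as 40 hex characters."""
    return hashlib.sha1(data).hexdigest()

# Hypothetical stand-ins for the raw bytes of image files.
photo = b"\x89PNG...pretend image data..."
exact_copy = bytes(photo)        # a byte-for-byte duplicate
edited = photo[:-1] + b"!"       # the same file with one byte changed

print(fingerprint(photo))
print(fingerprint(photo) == fingerprint(exact_copy))  # True: identical copies match
print(fingerprint(photo) == fingerprint(edited))      # False: any edit breaks the match
```

The digest cannot be turned back into the original bytes, but identical inputs always give identical digests. The flip side is that changing even one byte produces a completely different hash.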

Apple assures users that its CSAM detection technology is mindful of privacy. Images aren’t scanned in the cloud, but rather through on-device matching that uses a database of known CSAM image hashes provided to Apple by NCMEC and other child safety organizations.

Before an image is stored in iCloud Photos, it is matched on the device against the known CSAM hashes, which Apple transforms into an unreadable set of hashes stored securely on the user’s device. This is a key clarification: Apple says the process protects privacy because it only looks for matches to known (meaning existing) child abuse imagery, not new content.

Unlike the conventional hash described above, NeuralHash is designed so that identical or visually similar images, such as cropped or edited versions of a photo, result in the same hash.
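The difference between an ordinary hash and a perceptual one can be sketched with a much simpler, well-known perceptual hash called "average hash." This is a swapped-in technique, not Apple’s algorithm: NeuralHash relies on a neural network, while this toy version just compares each pixel of a tiny grayscale thumbnail to the thumbnail’s average brightness. The 4x4 pixel grids are hypothetical.

```python
def average_hash(pixels):
    """Toy perceptual hash: one bit per pixel, set if brighter than the mean.

    `pixels` is a small grayscale thumbnail given as rows of 0-255 values.
    Real systems first shrink and grayscale the full image; that step is omitted.
    """
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = "".join("1" if value > mean else "0" for value in flat)
    return f"{int(bits, 2):0{len(flat) // 4}x}"  # pack the bits as hex digits

def hamming(a, b):
    """Count the bits that differ between two equal-length hex hashes."""
    return bin(int(a, 16) ^ int(b, 16)).count("1")

original = [
    [200, 190,  40,  30],
    [210, 180,  50,  20],
    [ 60,  70, 220, 230],
    [ 50,  40, 210, 240],
]
# The same picture, slightly brightened, as a simple edit might do.
brightened = [[min(255, v + 10) for v in row] for row in original]
# A visually different picture (the negative of the original).
different = [[255 - v for v in row] for row in original]

print(average_hash(original), average_hash(brightened))           # cc33 cc33
print(hamming(average_hash(original), average_hash(different)))   # 16
```

Here the brightened copy keeps the same hash as the original, while the inverted image differs in every bit. A cryptographic hash like SHA-1 would treat the brightened copy as a completely different file. Perceptual hashes gain this robustness at the cost of occasional collisions between unrelated images, which is one reason a matching threshold and human review are used.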

This matching process relies on a cryptographic technique called private set intersection, which determines whether there’s a match without revealing the result. The match result is encoded, along with other encrypted data about the image, into a cryptographic safety voucher that is uploaded to iCloud Photos along with the image.

Apple cannot interpret the contents of these vouchers unless the iCloud Photos account crosses a threshold of known CSAM matches. Once it does, Apple manually reviews each report to confirm the match, disables the account, and reports the CSAM to NCMEC.
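The "nothing until a threshold" property can be illustrated with threshold secret sharing, which Apple’s technical summary of the system cites. The sketch below is the textbook Shamir scheme, not Apple’s protocol: a secret (think of it as a key that unlocks the vouchers) is split into shares, any `threshold` of them reconstruct it, and fewer reveal nothing about it. The prime, the key value, and the "one share per matching image" framing are illustrative assumptions, and this is not production cryptography.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime; all arithmetic is done modulo this value

def split_secret(secret, threshold, num_shares):
    """Hide `secret` as the constant term of a random polynomial of degree
    threshold - 1, and hand out points (x, y) on that polynomial as shares."""
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule for evaluating the polynomial
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares

def recover_secret(shares):
    """Lagrange interpolation at x = 0 recovers the polynomial's constant term."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = num * (-xj) % PRIME
                den = den * (xi - xj) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret

# Hypothetical: one share is released per matching image; 3 matches unlock the key.
key = 123456789
shares = split_secret(key, threshold=3, num_shares=10)
print(recover_secret(shares[:3]) == key)   # True: threshold reached
print(recover_secret(shares[4:7]) == key)  # True: any 3 shares work
print(recover_secret(shares[:2]) == key)   # False, except with negligible probability
```

Because the extra polynomial coefficients are random, two shares are consistent with every possible key, so below the threshold the holder learns nothing. At or above it, the key falls out exactly.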

Apple says the threshold is set to be extremely accurate, with less than a one in one trillion chance per year of incorrectly flagging a given account. Even so, users who believe their account was disabled by mistake can file an appeal.
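Why does requiring several matches help so much? As a back-of-the-envelope model, suppose each photo independently had a small chance `p` of falsely matching. The number of false matches in a library of `n` photos then follows a binomial distribution, and the chance of reaching a threshold of `t` matches falls off steeply as `t` grows. The values of `p`, `n`, and `t` below are purely hypothetical, and the independence model is our assumption, not Apple’s published analysis.

```python
import math

def prob_at_least(t, n, p):
    """P(X >= t) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    total = 0.0
    for k in range(t, n + 1):
        log_term = (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
                    + k * math.log(p) + (n - k) * math.log1p(-p))
        total += math.exp(log_term)
    return total

n_photos = 10_000  # hypothetical library size
p_false = 1e-6     # hypothetical per-photo false-match rate
for threshold in (1, 2, 5, 10):
    print(threshold, prob_at_least(threshold, n_photos, p_false))
```

Under these made-up numbers, an account has roughly a 1% chance of at least one false match, but the probability of reaching 10 false matches is astronomically smaller. That is the intuition behind waiting for many matches before anything is decrypted.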

It’s important to keep in mind that scanning user content is nothing new. Many cloud service providers, such as Dropbox, Microsoft, and Google, already scan user files for content that violates their terms of service or may be illegal, like CSAM.

Apple, on the other hand, has given users the option to encrypt their data before it ever reaches Apple’s iCloud servers. Its NeuralHash technology is designed to preserve that cloud privacy by running on the user’s device: it identifies when a user uploads known CSAM to iCloud, but the images are not decrypted until a threshold is met and a series of checks are completed.

3. Added guidance in Siri and Search

Apple is also expanding the CSAM-related guidance available in Siri and Search.

For example, if a user asks Siri or conducts a search for how to report CSAM or child exploitation, they’ll be directed to resources about how and where to file a report.

Siri and Search will also intervene when a user searches for CSAM-related topics. These alerts explain that interest in this topic is harmful and direct users to partner resources where they can get help.

Public response to Apple’s new features

These new features are coming later this year in updates to iOS 15, iPadOS 15, watchOS 8, and macOS Monterey. NeuralHash will first roll out in the U.S., but Apple hasn’t announced if or when it will be implemented internationally.

Although efforts to combat child sexual abuse and exploitation are broadly supported, Apple’s new child safety features have drawn criticism from some cryptographers, security experts, and privacy advocates. Many people are used to Apple’s more hands-off approach to content moderation and find these new surveillance measures unsettling.

Apple has tried to calm these fears by stressing that user privacy is still protected through multiple layers of encryption, and that flagged images are not decrypted and passed to Apple for manual review until several checks are completed and a threshold of known CSAM matches is met.

Apple also addressed concerns that someone could flood a user with unwanted CSAM to get that user’s account flagged or disabled. Apple emphasized that a manual review takes place before any account is disabled and said it will watch for evidence of misuse.

On the flip side, Apple’s NeuralHash technology has been reviewed and praised by child protection organizations and many cryptography experts. Many parents are eager for the new features to help them have a more active, informed role in what their kids navigate online.

Only time will tell the impact of Apple’s new child safety features. But this increased awareness, conversation, and accountability about CSAM—or child pornography, as it’s commonly known—could help save exploited children and prevent exploitation.

To report an incident involving the possession, distribution, receipt, or production of child pornography, file a report on the National Center for Missing & Exploited Children (NCMEC)’s website at www.cybertipline.com, or call 1-800-843-5678.
