Child exploitation happens way more often than you might expect, both in the likeliest and unlikeliest of places.

A recent investigation by the British news organization BBC into the popular live video chat website Omegle has shown the site to be a catalyst for the increasing online self-generated child sexual abuse material (CSAM, otherwise known as “child porn”) epidemic.

Omegle is a free online chat and video website that randomly pairs users in one-on-one chat sessions where they can chat anonymously. While the idea that led to Omegle’s creation, that the internet is full of cool people that everyone should be able to connect with, is fun in concept, the website has been less than “fun” in practice.

Global child protection groups are becoming increasingly concerned that predators are using the site to gather self-generated CSAM. The website’s growth over the course of the last year, from about 34 million visits a month in January 2020 to 65 million in January 2021, has only served to magnify the concerns.

Let’s get the facts.

What is self-generated CSAM?

The CSAM part is self-explanatory: it’s imagery or video that shows a child engaged in, or depicted as being engaged in, explicit sexual activity. The self-generated piece, on the other hand, refers to the fact that there is no obvious abuser in the child abuse image or video.

On Omegle, this means that predators are remotely recording videos of children performing explicit sexual acts. These predators then create and distribute catalogs of the material online. And that’s the case even though Omegle’s founder, Leif K-Brooks, told the BBC that his site had increased moderation efforts in recent months.

The Internet Watch Foundation (IWF), an organization responsible for finding and removing images and videos of child sexual abuse online, said analysts created 68,000 reports of self-generated CSAM content in 2020, a 77% increase over 2019.

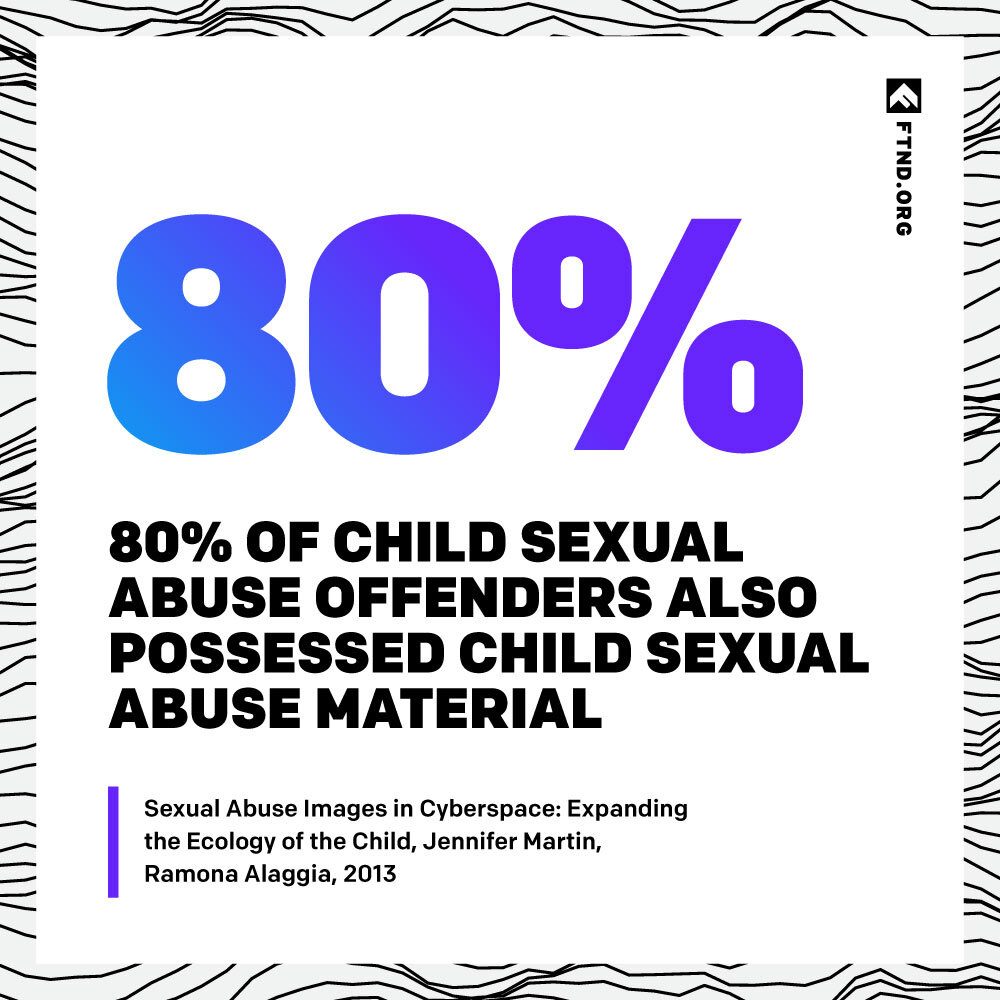

These numbers were also backed by a 2018 report from NetClean, an organization that creates technology that enables ethical businesses to disrupt the spread of CSAM. NetClean found that 90% of police officers investigating online CSAM said it was common or very common to find self-generated sexual content during their investigations.

According to Chris Hughes, the hotline director at the IWF, some of that is definitely from Omegle. Hughes’ foundation has “found self-generated abuse material… on the internet which has been created by predators who have captured and distributed footage from Omegle.”

How are predators using Omegle to create self-generated CSAM?

Some predators attempt to coerce their prospective victims over the live video chat the website helps facilitate.

That was the case for one eight-year-old girl, who was nearly coerced into sexual activity with an older man on the website. Her mother told the BBC that her daughter explored the site because she had “seen some videos go viral on TikTok about people being on this Omegle.”

The mother went on: people on the site “were saying she was beautiful, hot, sexy. She told them she was only eight years old and they were okay with that. She witnessed a man masturbating and another man wanted to play truth or dare with her. He was asking her to shake her bum, take off her top and trousers.”

Other predators simply hope to get lucky by starting chats with younger children who are engaging in sexually explicit behaviors of their own accord.

When the BBC monitored Omegle for approximately 10 hours, they were paired with dozens of under-18s (which is supposedly against Omegle’s rules, although Omegle doesn’t seem to have an age verification process in place). Some even appeared as young as seven or eight.

Two of the under-18s appeared to be prepubescent boys exposing themselves live on the video chat. One of them identified himself as being 14 years old. That alone points to a clear problem, and it doesn’t even account for the 12 fully exposed men, eight naked males, and seven porn advertisements the BBC was connected with at random over one two-hour period.

Hughes of the IWF said, “Some of the videos we’ve seen show individuals self-penetrating on webcam, and this type of activity is going on in a household setting often where we know parents are present. There are conversations that you can hear, even children being asked to come down for [dinner].”

The BBC’s investigation raises the question: what’s being done about all this, and how does porn culture normalize these very risky and abusive sexual encounters minors have with strangers?

The public response to the BBC’s investigation

TikTok, whose videos tagged with “Omegle” have been viewed more than 9.4 billion times, told the BBC that the news organization’s investigation led it to ban sharing links to Omegle. And, since late August of 2020, many schools, police forces, and government agencies have issued warnings about the site in the United States, United Kingdom, Norway, France, Canada, and Australia.

Julian Knight, the chairman of the House of Commons Digital, Culture, Media and Sport Select Committee (the House of Commons is part of the United Kingdom’s government and is similar to the United States’ legislative branch), said Omegle’s problems exhibit a need for greater legislation: “What we need to do is have a series of fines and even potentially business interruption if necessary, which would involve the blocking of websites which offer no protection at all to children.”

Why this matters

It’s important for individuals everywhere to be aware of the fact that Omegle and other sites like it aren’t only fun video chat providers of harmless entertainment. With a relaxed moderation system and no age verification system in place, Omegle is being used by predators to create and proliferate self-generated CSAM.

But we also believe it’s important to recognize that, if sites not directly related to porn are able to create and proliferate this kind of content (even if it’s by accident), how much more so will that be the case for actual porn sites?

Related: How To Report Child Sexual Abuse Material If You Or Someone You Know Sees It Online

Both Pornhub and Xvideos have reportedly been caught hosting and profiting off of illicit content like CSAM, and yet they also brand themselves as providers of harmless entertainment.

Knowing where and how child abuse happens online is one of the first steps to eradicating it completely. In our porn-obsessed culture, it isn’t surprising that even those who are under 18 years old don’t comprehend the risks and abusive nature of underage nudity online if their primary educator of sex has been porn.

This is why we exist: to shed light on porn’s harms and recognize how it contributes to a culture that is complicit in child abuse and child exploitation.

To report an incident involving the possession, distribution, receipt, or production of child sexual abuse material, file a report on the National Center for Missing & Exploited Children (NCMEC)’s website at www.cybertipline.com, or call 1-800-843-5678.

Your Support Matters Now More Than Ever

Most kids today are exposed to porn by the age of 12. By the time they’re teenagers, 75% of boys and 70% of girls have already viewed it [Robb, M.B., & Mann, S. (2023). Teens and pornography. San Francisco, CA: Common Sense.], often before they’ve had a single healthy conversation about it.

Even more concerning: over half of boys and nearly 40% of girls believe porn is a realistic depiction of sex [Martellozzo, E., Monaghan, A., Adler, J. R., Davidson, J., Leyva, R., & Horvath, M. A. H. (2016). “I wasn’t sure it was normal to watch it”: A quantitative and qualitative examination of the impact of online pornography on the values, attitudes, beliefs and behaviours of children and young people. Middlesex University, NSPCC, & Office of the Children’s Commissioner.]. And among teens who have seen porn, more than 79% use it to learn how to have sex [Robb, M.B., & Mann, S. (2023). Teens and pornography. San Francisco, CA: Common Sense.]. That means millions of young people are getting sex ed from violent, degrading content, which becomes their baseline understanding of intimacy. Out of the most popular porn, 33%-88% of videos contain physical aggression and nonconsensual violence-related themes [Fritz, N., Malic, V., Paul, B., & Zhou, Y. (2020). A descriptive analysis of the types, targets, and relative frequency of aggression in mainstream pornography. Archives of Sexual Behavior, 49(8), 3041-3053. doi:10.1007/s10508-020-01773-0; Bridges et al., 2010, “Aggression and Sexual Behavior in Best-Selling Pornography Videos: A Content Analysis,” Violence Against Women.].

From increasing rates of loneliness, depression, and self-doubt, to distorted views of sex, reduced relationship satisfaction, and riskier sexual behavior among teens, porn is impacting individuals, relationships, and society worldwide [Fight the New Drug. (2024, May). Get the Facts (Series of web articles). Fight the New Drug.].

This is why Fight the New Drug exists—but we can’t do it without you.

Your donation directly fuels the creation of new educational resources, including our awareness-raising videos, podcasts, research-driven articles, engaging school presentations, and digital tools that reach youth where they are: online and in school. It equips individuals, parents, educators, and youth with trustworthy resources to start the conversation.

Will you join us? We’re grateful for whatever you can give, but a recurring donation makes the biggest difference. Every dollar directly supports our vital work, and every individual we reach decreases sexual exploitation. Let’s fight for real love.