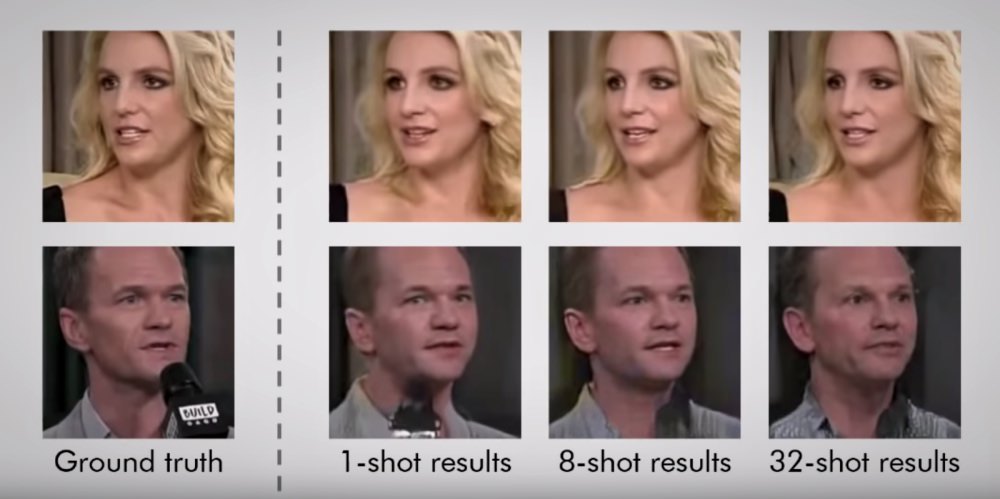

Cover photo: screenshot from YouTube/Egor Zakharov.

It used to require a lot of time, money, and skill to create convincing deepfake videos—but not anymore.

Realistic-looking video renderings are no longer exclusive to high-budget sci-fi movies. Recent advances in artificial intelligence make doctoring and creating fake footage easier than ever.

In fact, all you need is one picture.

New study on deepfake tech

Experts at Samsung’s AI Center in Moscow recently teamed up with the Skolkovo Institute of Science and Technology on a research paper demonstrating how fake videos can be generated from just one image. The implications of where this tech could lead are concerning, to say the least.

Researcher Egor Zakharov published a video demonstrating how easily a computer can turn as little as one image, or a small handful of images, into what he calls a “talking head model.”

“Effectively, the learned model serves as a realistic avatar of a person,” Zakharov shares in the video.

Zakharov notes that adding more images helps create models of “higher realism and better identity preservation.” However, his examples of fake talking footage created from just a single image of a person are surprisingly and eerily convincing.

Near the end of the video, Zakharov even creates living portraits from single pieces of art. The Mona Lisa, for example, springs to life as a moving character.

It would be cool if this kind of technology weren’t being used for such horrifying things.

Where advances in deepfake technology could lead

USA Today recently shared, “Crafting realistic humanoids in video games or CGI movies used to take years of training, hundreds of people, and millions of dollars. But that’s just not the case anymore. Today, with just a little facial mapping and powerful artificial intelligence, these sophisticated machine-learning techniques are becoming accessible to people who don’t necessarily have massive moviemaking budgets.

“And as deepfakes spread across online platforms, many have crept onto the darker parts of the internet. Famous singers and actors have been victims to pornographic deepfakes… Deepfakes could very well be used against the U.S. by hostile actors. Elections, political campaigns, and national security could all be at risk.”

National security expert Andy Grotta added, “You can sort of see a trajectory develop where, within a year or two, it’s going to be really hard for a person to distinguish between a real video and a fake video.”

And according to Hany Farid, professor of computer science at Dartmouth College, “Until recently, we have largely been able to trust audio and video recordings,” but advances in machine learning have democratized—or made accessible to everyone—tools for creating sophisticated, compelling fake footage.

It doesn’t take much imagination to see the impact this tech could have on individual lives and on society as a whole.

Imagine a world where discerning between what’s real and what’s fake is virtually impossible, and where explicit content of any person can be generated on-demand and distributed to the masses.

Now consider the fact that this reality is already well underway.

The growing demand for AI and deepfakes

The demand for AI content is only growing.

Take, for example, this computer-generated Instagram influencer who interacts with her 1.6 million followers. She’s fake and her followers know it, but they continue to like, comment, and DM as if she’s a real person. Or consider this Chinese news agency, which is testing AI news anchors to replace human staff.

The line between the virtual and the real is becoming more and more blurred, and the growing demand for deepfake porn is no exception. Today, many consumers want not just made-to-order porn of AI characters, but custom content of people they know.

The ability to digitally manipulate photos isn’t a new concept. But earlier this year the DeepNude app hit the market—making the AI technology used to create nude deepfake images available to anyone with a smartphone. That’s right—any user could upload an existing image of someone and see it transformed into pornographic content instantaneously.

In only a matter of months, over 500,000 users downloaded the DeepNude app. However, less than a day after the app started receiving widespread attention last month, its creators announced it was shutting down.

In a tweet, the team behind DeepNude shared, “We never thought it would become viral and we would not be able to control the traffic. We greatly underestimated the request…The probability that people will misuse it is too high… Surely some copies of DeepNude will be shared on the web, but we don’t want to be the ones who sell it.”

Their conclusion was that “the world is not yet ready for DeepNude,” implying that there will be a time in the future when the software is available to the masses. It’s only a matter of time.

Stop the demand for deepfake porn

As technology continues to advance, deepfakes will only become easier to generate and more difficult to detect. The fact that this tech already exists, and that so many people have shown interest in it, is especially concerning.

Consuming porn can be an escalating behavior, and it’s clear that, at least for some, fantasies with strangers are no longer enough. Many want custom-ordered porn featuring a familiar face, such as a favorite Hollywood actor, a coworker, an ex, a family member, or anyone on social media. Getting it no longer requires a big budget or an experienced AI specialist.

Today, there’s really no limit to who can be exploited and consumed as porn. The porn industry continues to capitalize on advances in tech and make deepfake porn available to the masses—no matter who’s hurt in the process.

Our takeaway? Know what’s possible, and decide for yourself how much you want to share online and what to believe when you see something questionable.

But whether or not your Instagram is set to private, together we can shed light on the reality of exploitation in the industry and stop the demand that fuels the supply of deepfake porn.

Your Support Matters Now More Than Ever

Most kids today are exposed to porn by the age of 12. By the time they’re teenagers, 75% of boys and 70% of girls have already viewed it [Robb, M.B., & Mann, S. (2023). Teens and pornography. San Francisco, CA: Common Sense.], often before they’ve had a single healthy conversation about it.

Even more concerning: over half of boys and nearly 40% of girls believe porn is a realistic depiction of sex [Martellozzo, E., Monaghan, A., Adler, J. R., Davidson, J., Leyva, R., & Horvath, M. A. H. (2016). “I wasn’t sure it was normal to watch it”: A quantitative and qualitative examination of the impact of online pornography on the values, attitudes, beliefs and behaviours of children and young people. Middlesex University, NSPCC, & Office of the Children’s Commissioner.]. And among teens who have seen porn, more than 79% use it to learn how to have sex [Robb, M.B., & Mann, S. (2023). Teens and pornography. San Francisco, CA: Common Sense.]. That means millions of young people are getting sex ed from violent, degrading content, which becomes their baseline understanding of intimacy. Among the most popular porn videos, 33% to 88% contain physical aggression and nonconsensual violence-related themes [Fritz, N., Malic, V., Paul, B., & Zhou, Y. (2020). A descriptive analysis of the types, targets, and relative frequency of aggression in mainstream pornography. Archives of Sexual Behavior, 49(8), 3041-3053. doi:10.1007/s10508-020-01773-0; Bridges et al. (2010). Aggression and sexual behavior in best-selling pornography videos: A content analysis. Violence Against Women.].

From increasing rates of loneliness, depression, and self-doubt, to distorted views of sex, reduced relationship satisfaction, and riskier sexual behavior among teens, porn is impacting individuals, relationships, and society worldwide [Fight the New Drug. (2024, May). Get the Facts (Series of web articles). Fight the New Drug.].

This is why Fight the New Drug exists—but we can’t do it without you.

Your donation directly fuels the creation of new educational resources, including our awareness-raising videos, podcasts, research-driven articles, engaging school presentations, and digital tools that reach youth where they are: online and in school. It equips individuals, parents, educators, and youth with trustworthy resources to start the conversation.

Will you join us? We’re grateful for whatever you can give, but a recurring donation makes the biggest difference. Every dollar directly supports our vital work, and every individual we reach decreases sexual exploitation. Let’s fight for real love.